AI-Powered Email Draft Generator

Designing an Agentic AI Assistant Framework for enterprise customer service at a US fintech record-keeper managing 50+ client solutions.

Context & Challenge

Slow, Manual Email Responses Draining Agent Productivity

A leading US fintech record-keeper managing over 50 client solutions faced a critical bottleneck in its customer service operations. Each support agent spent 4 to 10 minutes per email response: manually reviewing client records, checking email thread history, and composing replies. With thousands of daily interactions, this created significant operational drag.

The process was fragmented across multiple Salesforce screens, client databases, and email threads with no unified view. Agents were losing productive time on repetitive, information-gathering tasks before they could even begin drafting a response.

Agents handled queues across 50+ product lines with an average response time of 4–10 minutes — too slow for SLA targets.

Agents switched between 3–5 Salesforce screens, client databases, and email threads to gather context before composing a single reply.

A previous agency had created an initial AI draft concept, but it needed significant improvement in UX, information architecture, and scalability.

The solution had to work as a native Salesforce plugin using Lightning Web Components, respecting existing agent workflows.

My Brief

Improve the existing design through user research, redesign the interaction model, and deliver a production-ready Salesforce plugin UI that could scale beyond email drafting into a broader AI assistant framework.

Key Decisions

Strategic Choices That Shaped the Product

These are the critical decisions I made during the engagement. They connect the headline results to the detailed evidence below.

| Decision | What I Chose | Why | Tradeoff |

|---|---|---|---|

| Scope expansion | Evolved from email-only tool to 4-capability framework | Research revealed email drafting was only one slice of the agent productivity problem | Longer timeline, more complex system architecture |

| Integration approach | Hybrid chatbot (Scenario 1) with roadmap to full integration | Minimal disruption, faster validation; organization wasn't ready for full AI replacement | Dual-system maintenance in the short term |

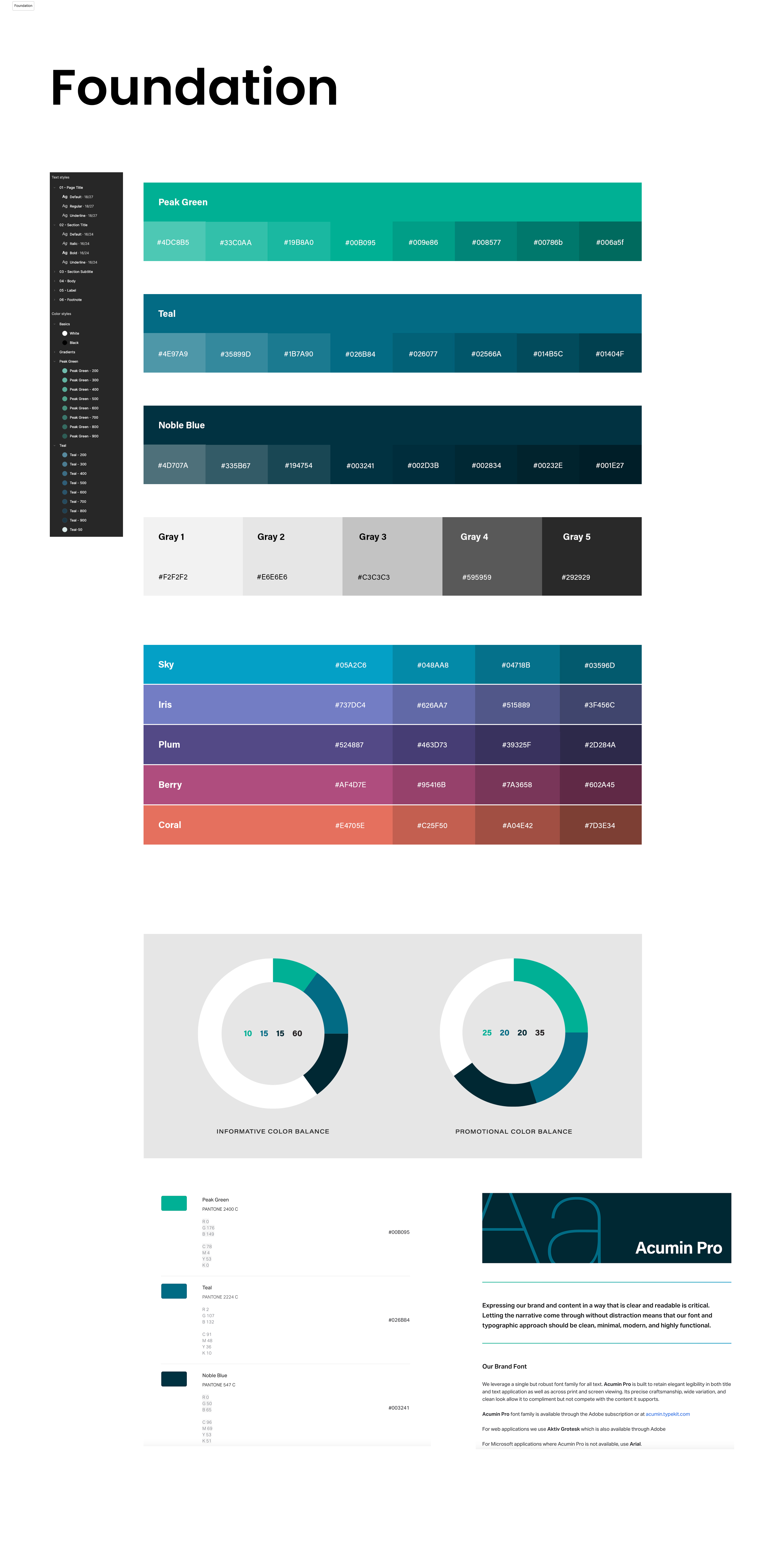

| Design system strategy | Built Foundation Tokens on top of Salesforce SLDS | Ensured visual consistency with existing Salesforce environment; enabled rapid iteration | Less creative freedom than a custom system |

| Architecture model | Three-phase extensible framework | New capabilities could be added without reworking existing architecture | Higher upfront design investment |

Discovery & Research

Understanding Agent Reality

Rather than starting from scratch, I began by auditing the previous agency's design deliverables and analyzing recordings from end-user workshops. This gave me a fast baseline — but also revealed significant gaps in information architecture and scalability thinking.

I then conducted contextual interviews with CS agents to map the email response workflow end-to-end, catalogued every Salesforce screen agents used during their daily work, and defined email response templates mapped to common case types and agent personas.

Research Snapshot

Design Audit

Previous agency deliverables + workshop recordings

Identified gaps in IA and scalability — original design was single-purpose with no framework thinking.

Agent Interviews

Contextual interviews with CS agents

Agents spent 60%+ of response time gathering context, not composing replies. Screen-hopping was the #1 pain point.

Salesforce Screen Inventory

Full catalogue of agent-used screens

Identified consolidation opportunities — much of the data agents needed lived in 3–5 separate views that could be unified.

Template Definition

Common case types × agent personas

Mapped email response templates to case categories, enabling structured LLM prompting with domain context.
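The mapping from case types and personas to templates can be pictured as a lookup that feeds a structured prompt. The sketch below is illustrative only: the case types, personas, guidance text, and the `build_prompt` helper are all hypothetical placeholders, not the client's actual templates or prompt format.

```python
from dataclasses import dataclass

# Hypothetical template registry keyed by (case_type, persona).
# All entries are placeholders, not the client's real templates.
TEMPLATES = {
    ("address_change", "frontline"): "Confirm the update and state when it takes effect.",
    ("fee_inquiry", "frontline"): "Explain the fee and point to the relevant statement line.",
    ("fee_inquiry", "specialist"): "Explain the fee calculation in detail and list dispute options.",
}

# Fallback guidance when no template matches the case type and persona.
DEFAULT_GUIDANCE = "Acknowledge the request and ask for any missing details."


@dataclass
class CaseContext:
    case_type: str
    persona: str
    client_name: str
    thread_summary: str


def build_prompt(ctx: CaseContext) -> str:
    """Assemble a structured prompt from the matching template and case context."""
    guidance = TEMPLATES.get((ctx.case_type, ctx.persona), DEFAULT_GUIDANCE)
    return "\n".join([
        f"Case type: {ctx.case_type}",
        f"Agent persona: {ctx.persona}",
        f"Client: {ctx.client_name}",
        f"Thread summary: {ctx.thread_summary}",
        f"Response guidance: {guidance}",
        "Draft a reply for the agent to review before sending.",
    ])


prompt = build_prompt(
    CaseContext("fee_inquiry", "specialist", "Example Client",
                "Client asks why a quarterly fee increased.")
)
print(prompt)
```

Keeping the template lookup separate from prompt assembly means new case types can be added as data rather than as code changes.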

Salesforce Sales Console — standard lead view and navigation structure

Case detail view — the fragmented layout agents navigated for every response

System Design

From Email Tool to Agentic AI Assistant Framework

Research revealed that the email draft tool addressed only one slice of a much larger agent productivity challenge. The scope expanded to include four core capabilities unified under a single interaction model.

AI Email Draft Generator

LLM-powered draft responses using case context, client history, and email thread data from the Data Lake.

Ticket Creation

Streamlined case and ticket creation directly from the assistant interface, eliminating context-switching.

Data Pull

On-demand retrieval of client records, case history, and account details surfaced contextually within the panel.

Playbook Guidance

Step-by-step workflow guidance powered by Panviva, delivering structured resolution paths for complex cases.

Agentic Workflow

Case Trigger

- Email arrives in queue

- Case auto-created

- Agent assigned

AI Processing

- Data Lake pull

- Context assembly

- LLM draft generation

- Compliance check

Agent Review

- Review AI draft

- Edit / approve

- Pull additional data

- Consult playbook

Output

- Send response

- Create ticket

- Log activity

- Close / escalate
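The four stages above can be sketched as a small pipeline in which the AI steps run automatically and the agent decides the outcome. This is a minimal sketch under stated assumptions: every function, field name, and the single blocked phrase are hypothetical stand-ins, and the Data Lake pull and LLM call are stubbed with fixed values.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Case:
    email_body: str
    agent: str
    context: dict = field(default_factory=dict)
    draft: str = ""
    compliant: bool = False
    log: list = field(default_factory=list)


def pull_context(case: Case) -> Case:
    # Stand-in for the Data Lake pull and context assembly.
    case.context = {"client": "Example Client", "open_cases": 1}
    case.log.append("context_assembled")
    return case


def generate_draft(case: Case) -> Case:
    # Stand-in for the LLM call; a real system would send the context to a model.
    case.draft = f"Hello {case.context['client']}, thank you for your message."
    case.log.append("draft_generated")
    return case


BLOCKED_PHRASES = ("guaranteed return",)  # placeholder compliance rule


def compliance_check(case: Case) -> Case:
    case.compliant = not any(p in case.draft.lower() for p in BLOCKED_PHRASES)
    case.log.append("compliance_checked")
    return case


def run_ai_processing(case: Case) -> Case:
    """AI Processing stage: run each step in order on the same case."""
    for step in (pull_context, generate_draft, compliance_check):
        case = step(case)
    return case


def agent_review(case: Case, approved: bool, edited_draft: Optional[str] = None) -> str:
    """Agent Review stage: nothing is sent without approval and a passing check."""
    if edited_draft is not None:
        case.draft = edited_draft
        compliance_check(case)  # re-check because the agent changed the text
    if approved and case.compliant:
        case.log.append("response_sent")
        return "sent"
    case.log.append("escalated")
    return "escalated"


case = run_ai_processing(Case(email_body="Why did my fee change?", agent="agent_1"))
print(agent_review(case, approved=True))
```

The sketch collapses the Output stage to two outcomes for brevity; ticket creation and activity logging would hang off the same review step.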

Three-Phase Architecture

I designed a scalable framework structured in three phases — extensible so new capabilities could be added without architectural rework.

Phase 1

Workflow Creation & Admin Center

- Structured step-by-step workflows via Panviva

- Role-based admin permissions

- Workflow usage and KPI tracking

- Knowledge asset repository with governance

Phase 2

User Interaction Layer

- Search and recommendation engine in Salesforce

- AI email draft with compliance guardrails

- Chatbot integration (Hybrid + Full scenarios)

- Unified activity log for case context

Phase 3

Orchestration & Processing Layer

- Workflow matching and retrieval engine

- Contextual data processing (cases, profiles, history)

- Escalation logic for unmatched queries

- Integration layer: Panviva + Salesforce + Chat
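To illustrate how workflow matching and escalation for unmatched queries could fit together, here is a minimal sketch. It swaps in simple keyword overlap as the matching technique purely for illustration; the workflow names, keywords, and `MIN_OVERLAP` threshold are assumptions and do not reflect how the actual retrieval engine or Panviva integration works.

```python
from typing import Optional

# Hypothetical workflow catalogue: workflow name -> keywords that suggest it applies.
WORKFLOWS = {
    "update_beneficiary": {"beneficiary", "update", "change"},
    "loan_repayment": {"loan", "repayment", "payoff"},
    "statement_request": {"statement", "copy", "download"},
}

MIN_OVERLAP = 2  # assumed threshold; below this the query counts as unmatched


def match_workflow(query: str) -> Optional[str]:
    """Return the workflow with the highest keyword overlap, or None if too weak."""
    # Whitespace split only; punctuation handling is omitted in this sketch.
    words = set(query.lower().split())
    best_name, best_score = None, 0
    for name, keywords in WORKFLOWS.items():
        score = len(words & keywords)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= MIN_OVERLAP else None


def route(query: str) -> dict:
    """Guide the agent through a matched workflow, or escalate when nothing matches."""
    workflow = match_workflow(query)
    if workflow is None:
        return {"action": "escalate", "reason": "no matching workflow"}
    return {"action": "guide", "workflow": workflow}


print(route("please update my beneficiary"))
print(route("question about something else"))
```

An explicit threshold makes the escalation path a deliberate outcome rather than a failure mode, which is the point of having escalation logic in the orchestration layer.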

Decision Memo

Chatbot Integration Strategy

Scenario 1 (Hybrid): The existing chatbot continues handling routine queries, and a dedicated AI Knowledge Assistant is introduced for workflow guidance. Minimal disruption, modular deployment.

Scenario 2 (Full integration): The chatbot evolves into a unified assistant consolidating all capabilities. Single interaction point, but requires greater organizational readiness.

Design Hypothesis: AI Assistant Framework — three-phase architecture from admin workflows to orchestration layer

System Design

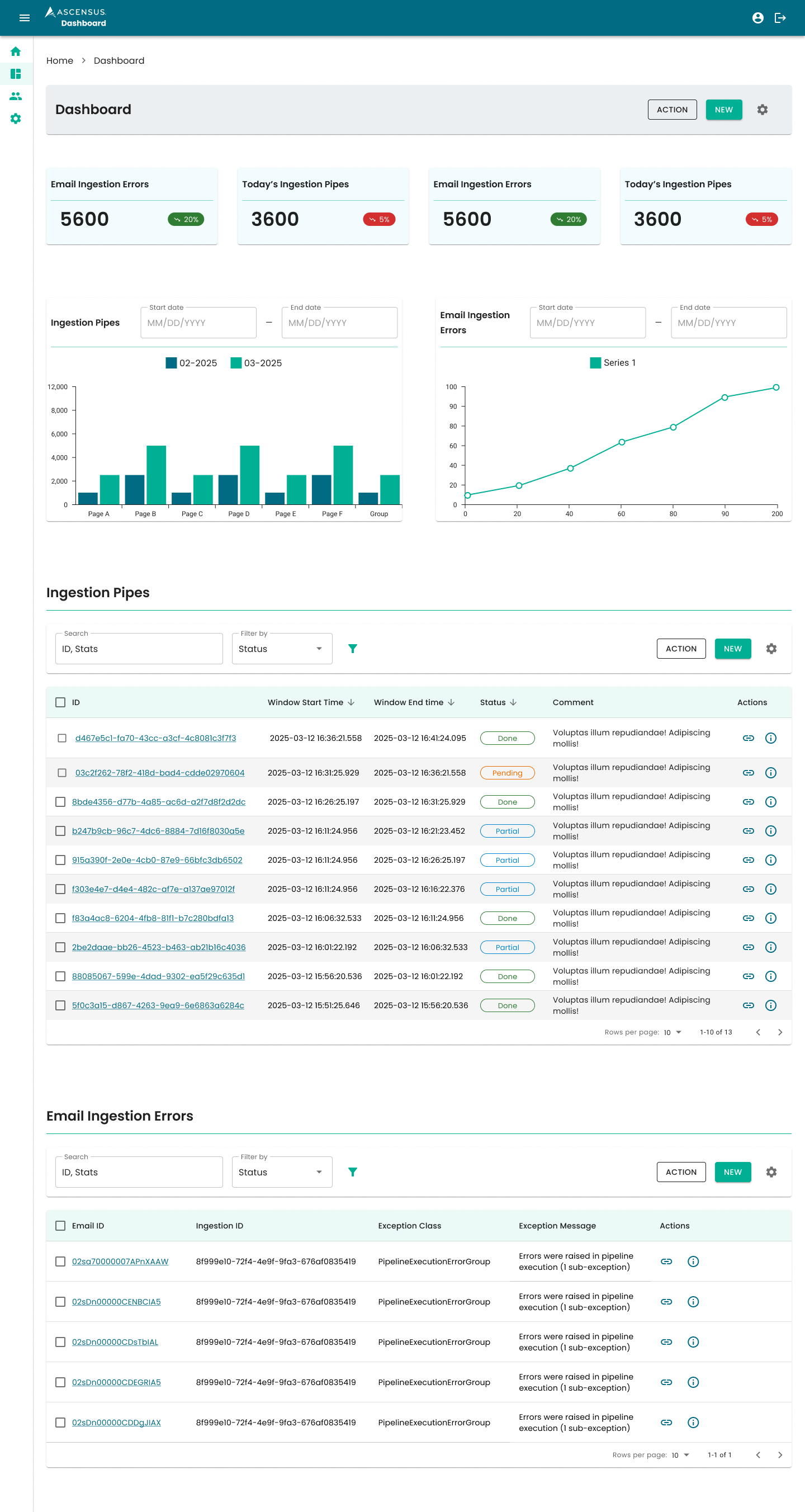

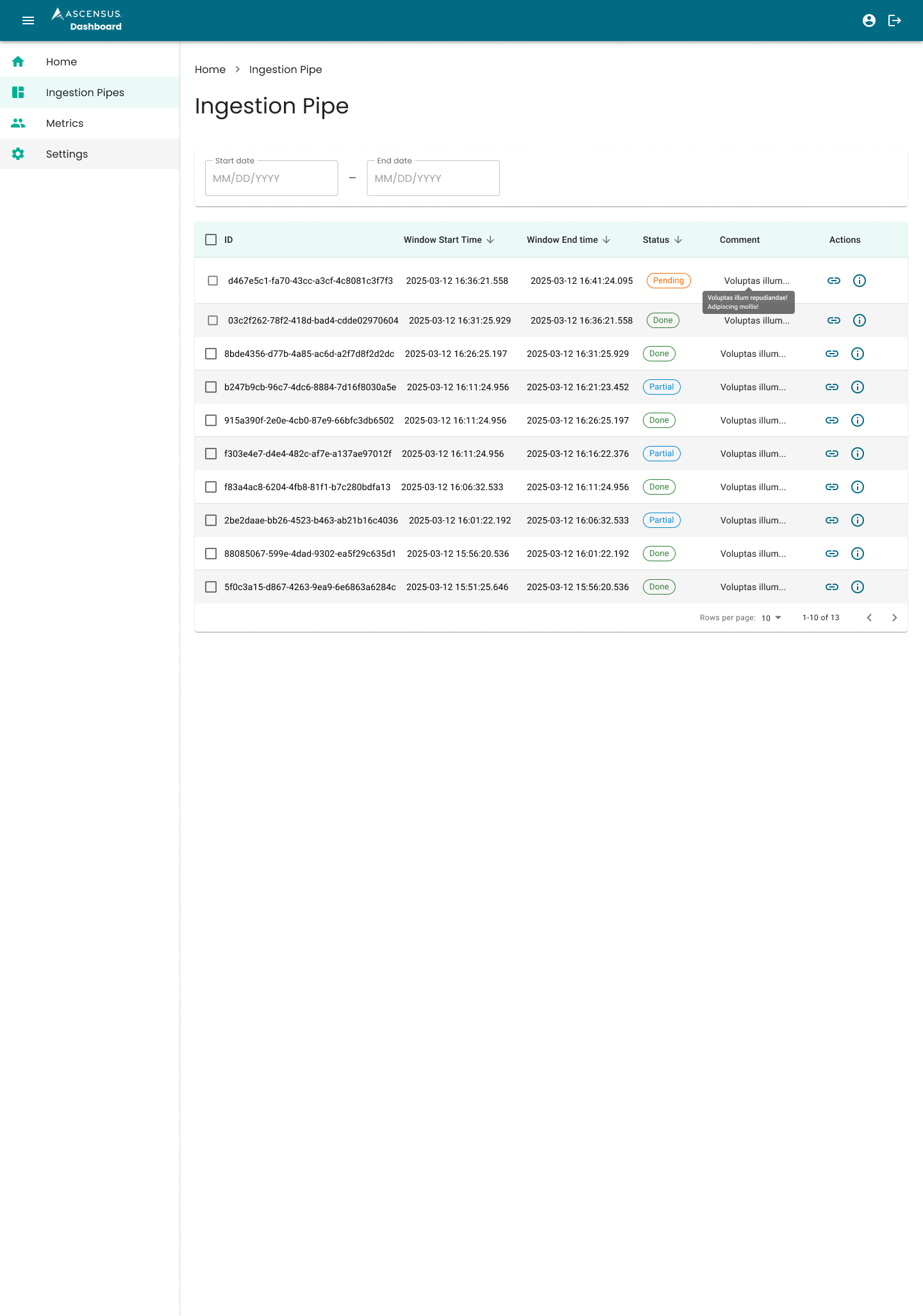

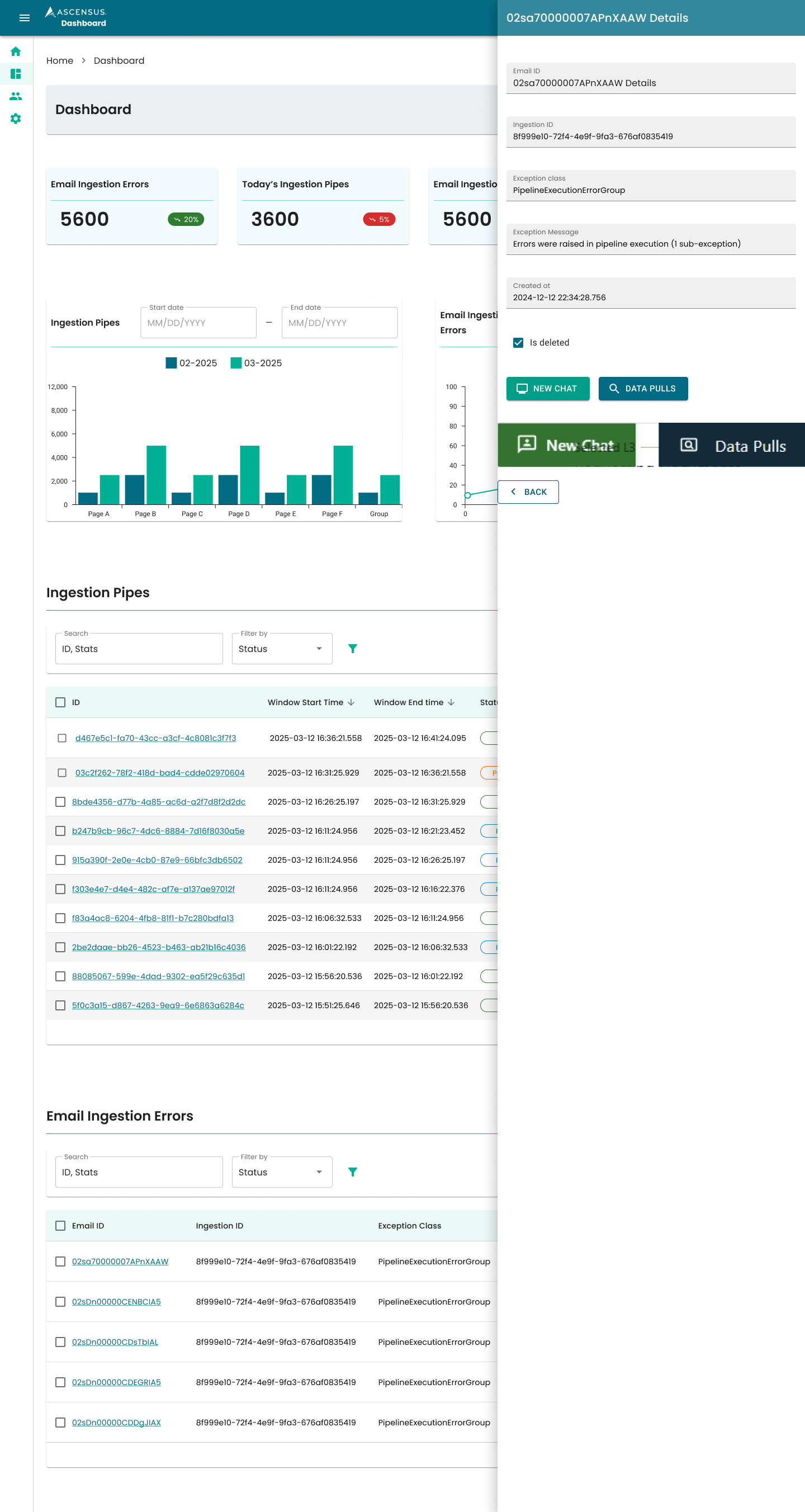

Ingestion Pipeline Dashboard

As part of the Data Lake integration, I designed a monitoring dashboard for engineering and support teams to track data ingestion health — critical for ensuring the AI assistant had accurate, up-to-date data.

- Home Dashboard — KPI cards (error counts, pipe totals) with trend indicators and dual chart views for ingestion volumes and error patterns

- Ingestion Pipes Table — Sortable by ID, window times, and status with inline comment preview and action links to New Relic / Traceloop

- Email Ingestion Errors — Linked to ingestion IDs with exception class details, full error messages, and deletion status tracking

- Detail Panel — Slide-out panel showing complete error context: email ID, ingestion ID, exception traceback, and creation timestamp

The dashboard followed the Agent Assist design system principles. I created two iterations: a consolidated single-page layout and a navigation-separated version with dedicated pages per data type. Both included KPI summaries, trend visualizations, and drill-down detail panels. Access was secured via SecureAuth / Active Directory, with an unpublished route for the initial rollout.
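The relationship between the dashboard views can be sketched as a small data model: KPI cards aggregate over pipes and errors, and the drill-down filters errors by ingestion ID. The field names and status values below are assumptions made for illustration; the real schema is not described in this case study.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class IngestionPipe:
    pipe_id: str
    status: str  # assumed values: "success" or "failed"


@dataclass
class IngestionError:
    email_id: str
    ingestion_id: str  # links the error back to its ingestion pipe
    exception_class: str
    deleted: bool


def kpi_summary(pipes, errors):
    """Compute the headline numbers a row of KPI cards might show."""
    return {
        "pipe_total": len(pipes),
        "failed_pipes": sum(1 for p in pipes if p.status == "failed"),
        "error_total": len(errors),
        "errors_by_class": dict(Counter(e.exception_class for e in errors)),
    }


def errors_for_pipe(pipe_id, errors):
    """Drill-down: all errors linked to one ingestion ID."""
    return [e for e in errors if e.ingestion_id == pipe_id]


# Made-up sample records, for illustration only.
pipes = [IngestionPipe("p1", "success"), IngestionPipe("p2", "failed")]
errors = [
    IngestionError("e1", "p2", "TimeoutError", False),
    IngestionError("e2", "p2", "ValueError", True),
]
print(kpi_summary(pipes, errors))
print(errors_for_pipe("p2", errors))
```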

Consolidated single-page dashboard — KPI summary cards, trend charts, ingestion pipes and error tables

Navigation-separated view — Ingestion Pipes table with status filters

Slide-out detail panel — complete error context with exception traceback

Design Evolution

Iterating Toward the Right Interaction Model

Foundation Token System

I built a Foundation Token system and UI kit based on Salesforce Lightning Design System (SLDS) components, ensuring visual consistency and rapid iteration across all design variants. This allowed the team to prototype multiple interaction models efficiently without starting from scratch each time.
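One way to picture tokens layered on a platform design system is as semantic names that alias lower-level values. The sketch below is a generic illustration of that layering, not the project's actual token set: every token name and color value is a hypothetical placeholder and none is taken from SLDS.

```python
# Hypothetical base layer standing in for values inherited from the platform design system.
BASE_TOKENS = {
    "color.blue.60": "#0B5CAB",
    "color.neutral.10": "#F3F3F3",
    "spacing.medium": "1rem",
}

# Foundation layer: semantic names that alias other tokens via "{token.name}" references.
FOUNDATION_TOKENS = {
    "color.action.primary": "{color.blue.60}",
    "color.surface.panel": "{color.neutral.10}",
    "button.background": "{color.action.primary}",
}


def resolve(name, seen=None):
    """Follow alias references until a concrete value is reached."""
    seen = seen or set()
    if name in seen:
        raise ValueError(f"circular token reference: {name}")
    seen.add(name)
    value = FOUNDATION_TOKENS.get(name, BASE_TOKENS.get(name))
    if value is None:
        raise KeyError(name)
    if value.startswith("{") and value.endswith("}"):
        return resolve(value[1:-1], seen)
    return value


print(resolve("button.background"))
```

Because components reference semantic names only, a change to a base value propagates to every design variant without touching the components themselves.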

Foundation Token System — color palette, typography scale, and brand guidelines

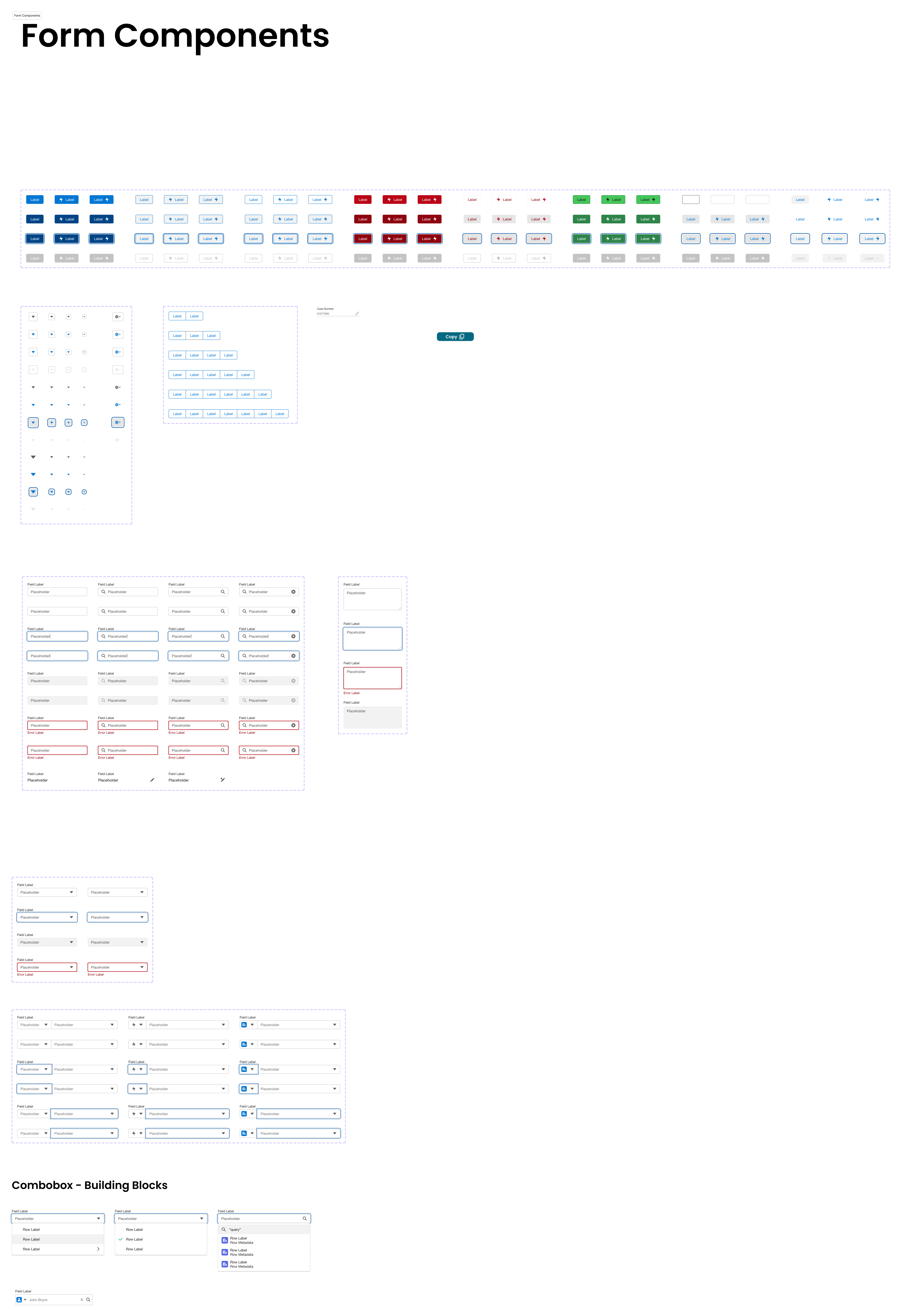

UI Kit — form components, badges, inputs, and selectors extending Salesforce Lightning Design System

Iterative Design Versions

I produced multiple design versions exploring different interaction patterns for the AI draft experience. Each version was validated through stakeholder workshops and direct user feedback sessions with CS agents. The design evolved through continuous refinement cycles incorporating both qualitative feedback and operational constraints.

Design Progression

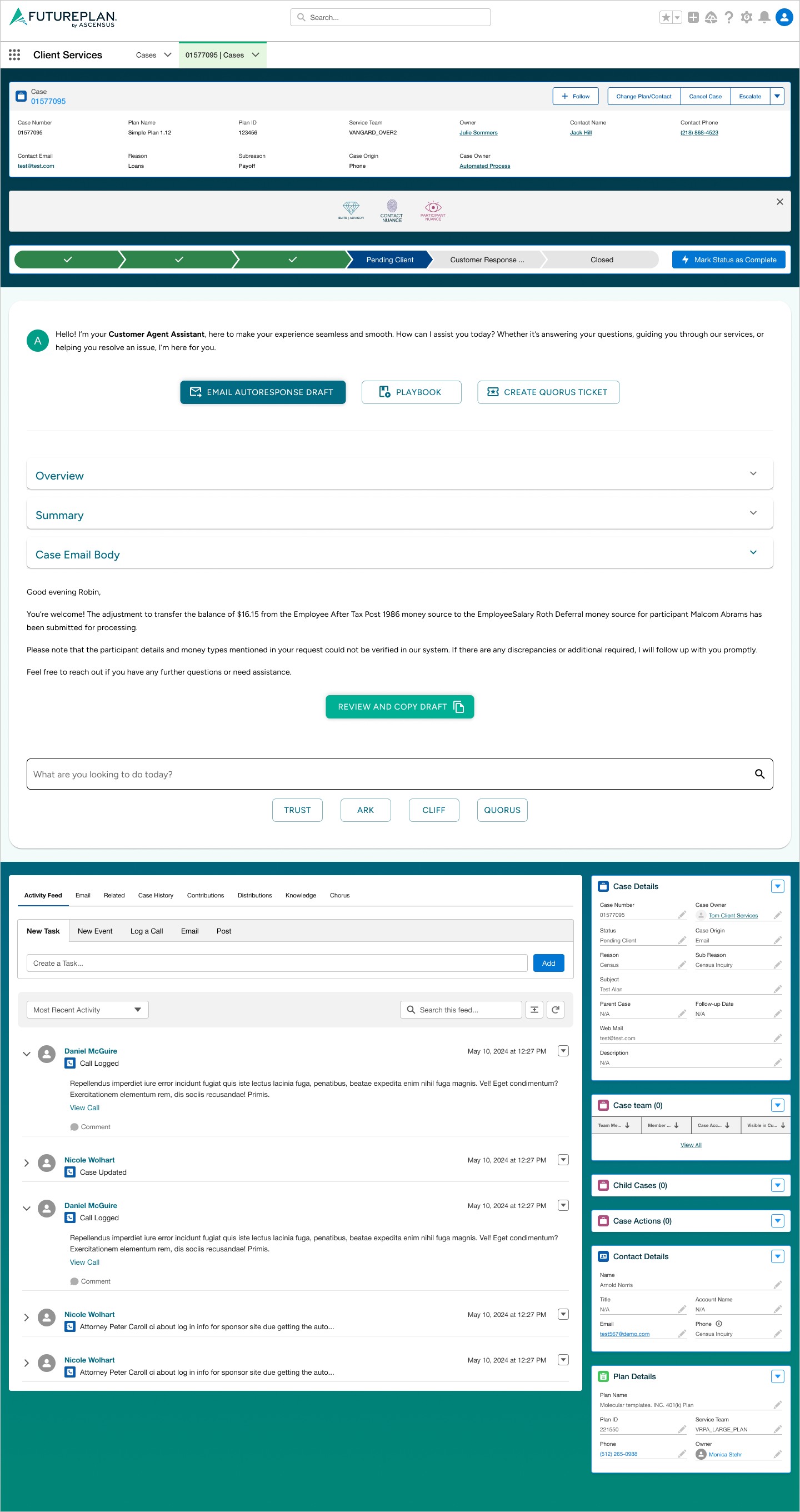

Three key iterations show how the interaction model evolved — from a linear summary-and-draft layout to a sidebar-navigated assistant, and finally to a streamlined unified panel with collapsible sections.

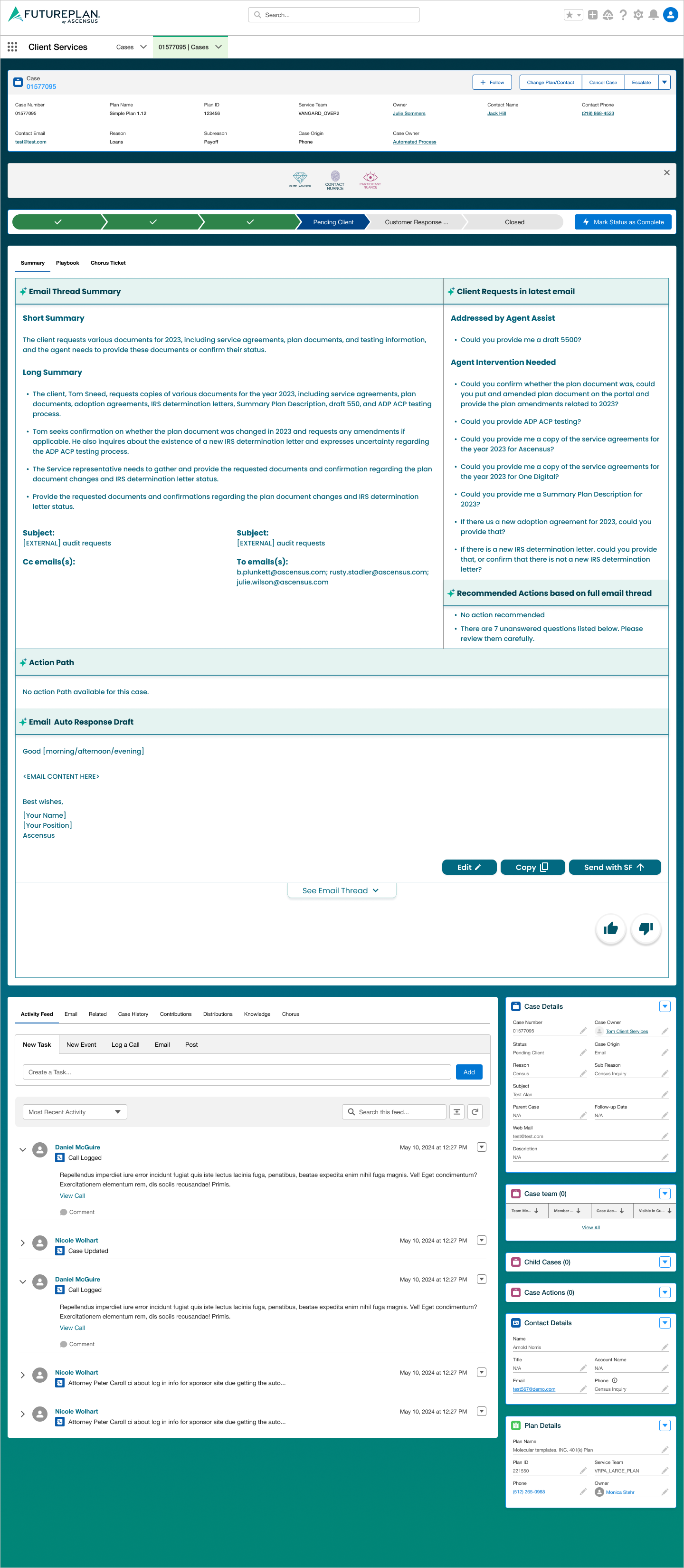

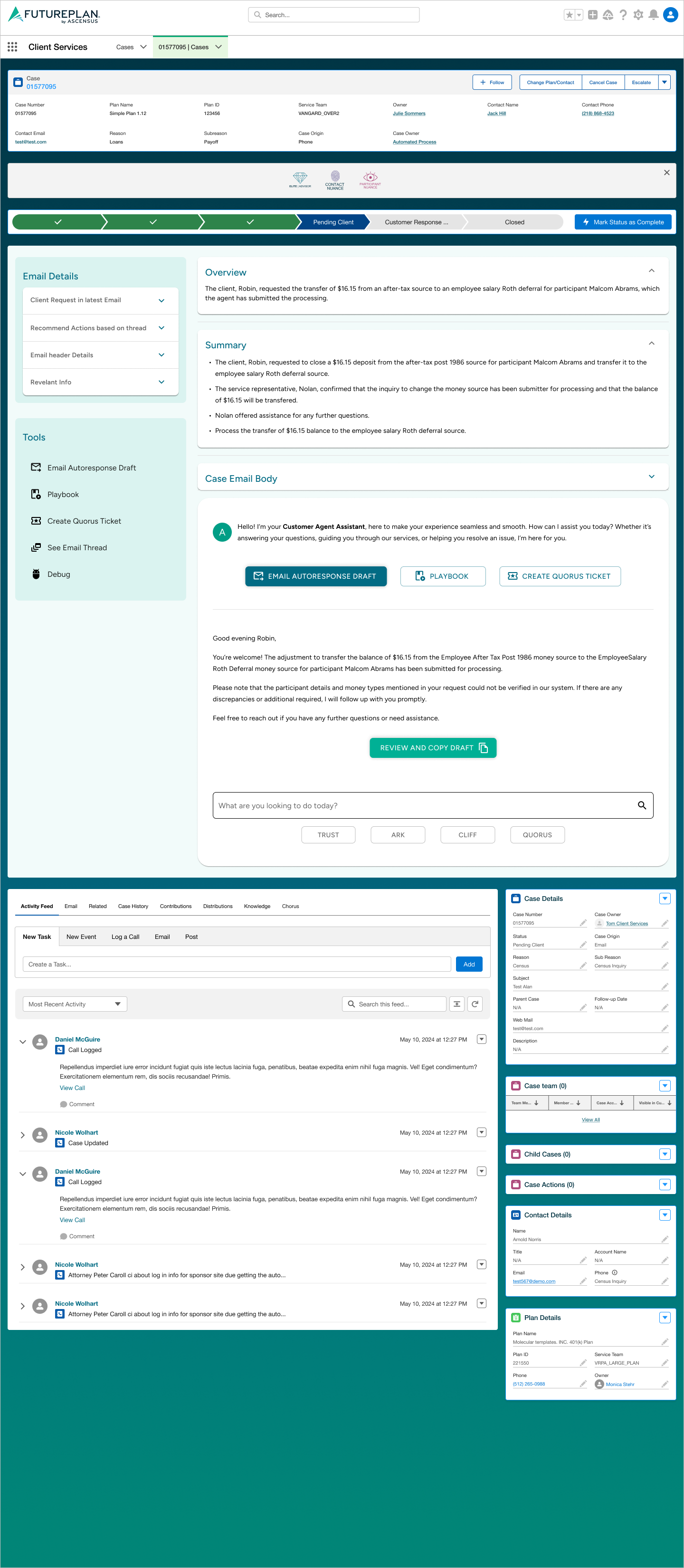

Version 1 — Linear layout: email summary, thread analysis, and draft generation embedded in the case view

Version 2 — Sidebar navigation: Email Details + Tools panel with chatbot-style interaction and multiple capabilities

Version 3 (Final) — Unified panel: collapsible sections, prominent action buttons, and streamlined chatbot input

Validation

Usability Testing & Stakeholder Alignment

The unified chatbot product — combining email draft, playbook, data pull, and ticket creation — underwent usability testing sessions with the customer service agents who would use it daily.

Agent Testing

- Task-based usability tests

- Agents across product lines

- Observed pain points

- Direct feedback sessions

Stakeholder Workshops

- Feature prioritization

- Scope alignment

- Architecture validation

- Roadmap consensus

Iteration Cycles

- Qualitative feedback loops

- Operational constraint checks

- Technical feasibility reviews

- Progressive refinement

Key Finding

Agents strongly preferred having all capabilities accessible from a single panel rather than switching between tools. The AI draft generation was the highest-value feature, but the contextual data pull was the most frequently used — it eliminated the manual screen-hopping that consumed the majority of agent time.

Results & Impact

Measurable Outcomes

- Email Response Time: 75–90% faster

- Context Gathering: single unified view

- Draft Generation: ~30s per draft

- Product Scope: 4x scope expansion

Deliverables

Reflections